13 Jan Harmonic Convergence of Light

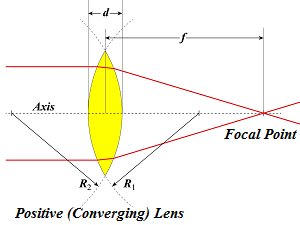

Light waves diverge, converge, and bend on their journey to the places where they are perceived. When we choose to focus, perceived light waves enter us through the portals of our eyes, and the signals they produce flow through the visual cortex and resonate in the brain until they trigger recognition, often very quickly. The illustration shows a lens shaped like the lens of the human eye. The red lines represent the bending and convergence just described. Then what happens? Perception and learning are the main subjects of this section of Understanding Context, and visual processing is one of its major fields of play. This is because vision is the most exercised sense in AI. You know what they say: “An eye for AI” (or was it “Aye-eye for AI?”). This post, and the section as a whole, examine the physiology of perception and bring up important details that support the narrow academic objectives of the author. If my arguments don’t convince you to see things my way, your case is probably hopeless. 😉

| Understanding Context Cross-Reference |
|---|
| Click on these Links to other posts and glossary/bibliography references |

| Prior Post | Next Post |
|---|---|
| The Pedantic Querulous Shrinking Violet | Visual Input Processing |
| Definitions | References |
| perception learning | harmonic convergence |
| visual processing | Hubel 1988 |
| cognitive science kinesthesia | Ballard 1982 |

Pioneering neural network R&D has been built around image recognition. I will look at some relevant projects that help show the roles of perception in cognition. I will also discuss the functional similarities and differences between perception and other cognitive tasks, and the implications of that comparison. The results of research in the physiology and psychology of vision will be presented as well, along with computational modeling. I am attempting to build a strong case and set the stage for a harmonic convergence in “The End of Code”.

Would it be kosher to tell you the conclusion reached in this section right now? Here it is, kosher or not: Cognition follows perception. Our ability to learn abstract concepts is wholly dependent on our understanding of concrete facts about the world around us. These facts can only be learned through perception. In short… I perceive, thereafter I think, and from thence, I can relate.

Each of the senses is a willing contributor to this process, as are less obvious sensory capabilities such as proprioception. I also argue that emotions are necessary for complex cognitive tasks such as language understanding, but that will be a topic for another post. Because the “perception” part of the formula includes complex social interactions, many of them involving language, humans are capable of moving quickly from physical perception to abstract reasoning. We automatically think about other people’s intent and about our own place in the social hierarchy. This abstract capability makes the picture and the formula (I perceive, thereafter I think, and from thence, I can relate) seem like absurd reductions or simplifications. Perhaps they are. But from a modeling perspective, I need a set of dependent processes I can teach the computer to perform. My base assumption is that the computer is going to act on perceived changes in the environment; thus, it all begins with perception.
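To make the modeling premise concrete, here is a minimal sketch of a chain of dependent processes that only starts working when something in the environment changes. It is an illustration under stated assumptions, not the author's model: the stage functions `perceive`, `think`, and `relate`, the `threshold` value, and the representation of the environment as a dictionary of numeric features are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Percept:
    """A detected change in one sensed feature (hypothetical representation)."""
    feature: str
    old: float
    new: float


def perceive(previous: dict, current: dict, threshold: float = 0.1) -> list:
    """Stage 1: report only features whose value changed by more than threshold.

    Features missing from the previous snapshot are treated as 0.0 (an assumption).
    """
    percepts = []
    for feature, value in current.items():
        old = previous.get(feature, 0.0)
        if abs(value - old) > threshold:
            percepts.append(Percept(feature, old, value))
    return percepts


def think(percepts: list) -> list:
    """Stage 2: turn concrete percepts into simple abstract labels."""
    return [f"{p.feature} {'rising' if p.new > p.old else 'falling'}" for p in percepts]


def relate(concepts: list, memory: set) -> dict:
    """Stage 3: relate each concept to prior experience, then remember it.

    The value is True if the concept had been encountered before.
    """
    relations = {c: (c in memory) for c in concepts}
    memory.update(concepts)
    return relations


def step(previous: dict, current: dict, memory: set) -> dict:
    """Run the dependent stages in order: perceive, thereafter think, then relate."""
    percepts = perceive(previous, current)
    if not percepts:
        return {}  # nothing perceived, so nothing to think about or relate
    return relate(think(percepts), memory)


if __name__ == "__main__":
    memory = set()
    dim = {"brightness": 0.2, "motion": 0.0}
    bright = {"brightness": 0.8, "motion": 0.0}
    print(step(dim, bright, memory))     # {'brightness rising': False}
    print(step(bright, bright, memory))  # {}
    print(step(dim, bright, memory))     # {'brightness rising': True}
```

The design choice mirrors the text: each stage consumes only the output of the stage before it, and the whole chain stays idle unless perception reports a change.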

The Mind and the Brain

A bunch of my posts examine the brain, taking a few small side trips into other parts of the anatomy. We know a great deal about how the brain works, but our knowledge is far from complete. From a physiological standpoint, we are nowhere near duplicating what we know in the form of a thinking machine: a mechanical brain. Nonetheless, as we enter the realm of cognitive science, we want to broaden the perspective, moving from thoughts of a mechanical brain to the possibility of a mechanical mind. What would be the difference? No new scientific evidence points to particles beyond the resolving power of microscopy, or to a hidden organization within the brain, that would reveal a new way of processing information.

It simply seems to us, as it has to so many others, that the mind is wider than the brain. The mind is incomplete if it does not interact with the body’s other physiological systems. Consider how our hearts respond to emotional and kinesthetic stimuli. Our digestive systems signal hunger, and that hunger motivates us to action. Even our skin gets into the act, generating goose bumps when we are frightened. These are just a few of many obvious, everyday examples that underscore what we already know about our own selves – the mind encompasses more than the brain. Perception is the beginning of these phenomena.

My planned posts in this section will attempt to demonstrate that we perceive, thereafter we think, and from thence, we can relate. This sequence is an important part of the model, as are processes that happen in parallel. If this is indeed the case, we must learn about perception before we can fully understand cognition, which is the subject of my posts in the next section.
